The post Using SQL Assertions to Enforce Temporal Data Integrity appeared first on Philipp Salvisberg's Blog.

]]>More than twelve years ago, I described the multi-temporal features of Oracle Database in this blog post. In the conclusion, I wrote that I missed a temporal DML API, temporal integrity constraints, temporal joins, and temporal aggregations. These features are still missing today.

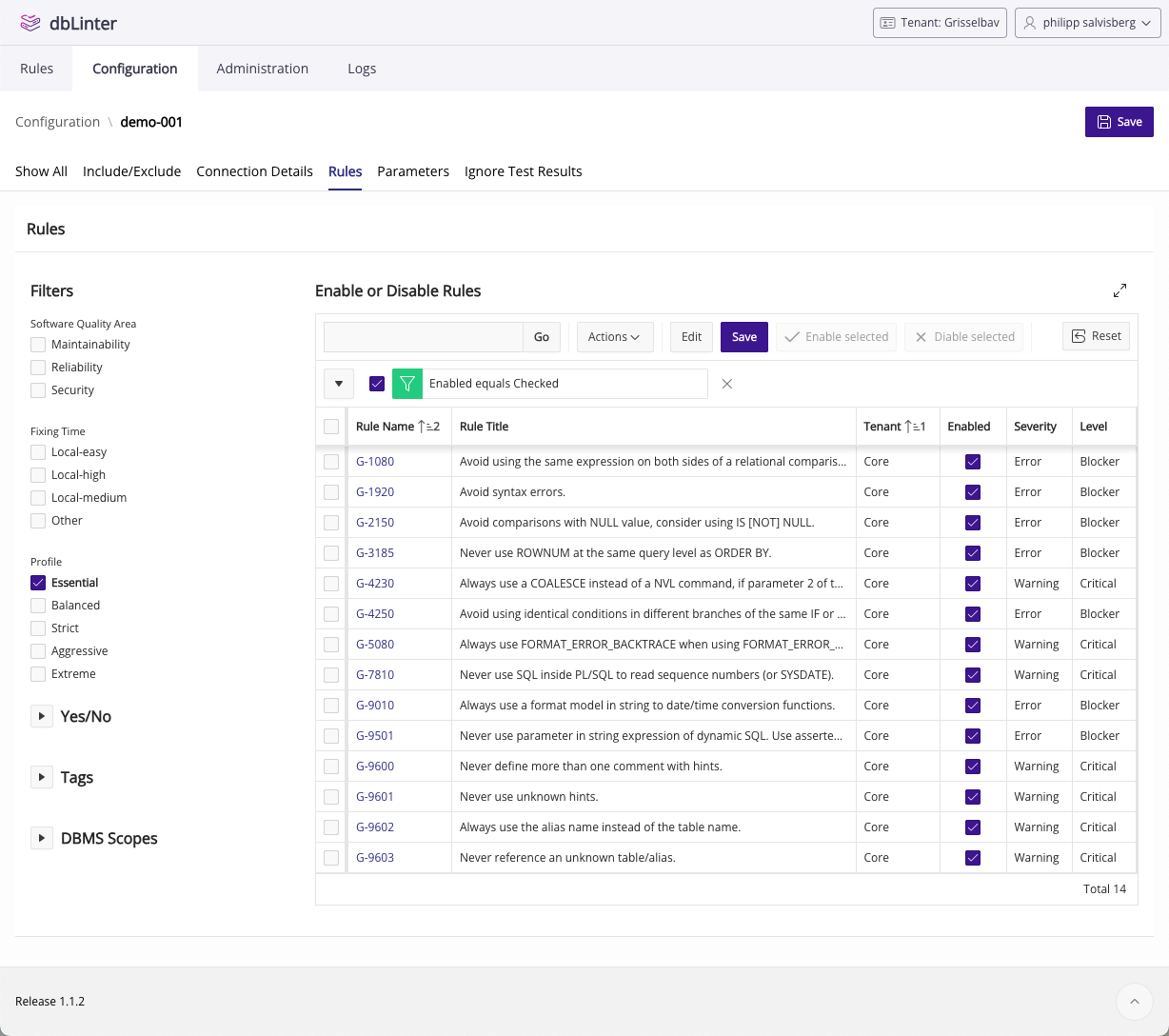

However, in the latest version of Oracle AI Database (23.26.1), SQL assertions make it possible to define temporal integrity constraints manually. In this post, I demonstrate how.

Data Model

There are various ways to model temporal data. In this post, I use the well-known non-temporal DEPT-EMP model as a basis and add a history table for each non-temporal table with a valid-time period named vt using the column vt_start for the beginning of the period and vt_end for the end of the period. Please note that Oracle Database uses half-open intervals for temporal periods and NULL for -∞ and +∞.

Non-temporal tables store the most recent version of the data, which is kept in their history tables. This simplifies access to the latest version and provides the expected performance for non-temporal data. The disadvantage is that the API must maintain redundant data. Note that the latest version is not necessarily the current version. The current version is the one whose valid-time period contains the current date and time.

This model versions all columns of the base table, potentially resulting in more rows in the history tables than necessary. Furthermore, the model does not support soft deletes. In other words, there is no is_deleted flag. This makes managing data integrity easier. No need to keep reference and structure data just because some deleted rows still reference it. In any case, this is sufficient for this blog post.

Setup

For the examples in this blog post, I used Oracle AI Database 26ai Enterprise Edition Release 23.26.1.0.0.

Connected as SYS, I ran the following script to create the user DEMO with the necessary privileges.

create user demo identified by demo

default tablespace users

temporary tablespace temp

quota unlimited on users;

grant create session to demo;

grant create table to demo;

grant create sequence to demo;

grant create assertion to demo;User DEMO created.

Grant succeeded.

Grant succeeded.

Grant succeeded.

Grant succeeded.Connected as DEMO, I ran the following script to implement the data model as outlined in the previous chapter with initial data.

create table dept (

deptno number(2, 0) not null,

dname varchar2(14 char) not null,

loc varchar2(13 char) not null,

constraint dept_pk primary key (deptno) deferrable initially immediate

);

create table dept_ht (

hist_id integer generated always as identity not null,

vt_start date null,

vt_end date null,

deptno number(2, 0) not null,

dname varchar2(14 char) not null,

loc varchar2(13 char) not null,

period for vt (vt_start, vt_end),

constraint dept_ht_pk primary key (hist_id),

constraint dept_ht_uk unique (deptno, vt_start) deferrable initially immediate,

constraint dept_ht_dept_fk foreign key (deptno) references dept deferrable initially immediate,

constraint dept_ht_vt_ck check (vt_start is null or vt_end is null or vt_start < vt_end) deferrable initially immediate

);

create index dept_ht_dept_fk_i on dept_ht (deptno);

create table emp (

empno number(4, 0) not null,

ename varchar2(10 char) not null,

job varchar2(9 char) not null,

mgr number(4, 0),

hiredate date not null,

sal number(7, 2) not null,

comm number(7, 2),

deptno number(2, 0) not null,

constraint emp_pk primary key (empno) deferrable initially immediate,

constraint emp_emp_fk foreign key (mgr) references emp deferrable initially immediate,

constraint emp_dept_fk foreign key (deptno) references dept deferrable initially immediate

);

create index emp_dept_fk_i on emp(deptno);

create index emp_emp_fk_i on emp(mgr);

create table emp_ht (

hist_id integer generated always as identity not null,

vt_start date null,

vt_end date null,

empno number(4, 0) not null,

ename varchar2(10 char) not null,

job varchar2(9 char) not null,

mgr number(4, 0) null,

hiredate date not null,

sal number(7, 2) not null,

comm number(7, 2) null,

deptno number(2, 0) not null,

period for vt (vt_start, vt_end),

constraint emp_ht_pk primary key (hist_id),

constraint emp_ht_uk unique (empno, vt_start) deferrable initially immediate,

constraint emp_ht_emp_empno_fk foreign key (empno) references emp deferrable initially immediate,

constraint emp_ht_emp_mgr_fk foreign key (mgr) references emp deferrable initially immediate,

constraint emp_ht_dept_fk foreign key (deptno) references dept deferrable initially immediate,

constraint emp_ht_vt_ck check (vt_start is null or vt_end is null or vt_start < vt_end) deferrable initially immediate

);

create index emp_ht_emp_fk on emp_ht (deptno);

create index emp_ht_dept_fk on emp_ht (mgr);

insert into dept (deptno, dname, loc)

values (10, 'ACCOUNTING', 'NEW YORK'),

(20, 'RESEARCH', 'DALLAS'),

(30, 'SALES', 'CHICAGO'),

(40, 'OPERATIONS', 'BOSTON');

insert into dept_ht (deptno, dname, loc)

select deptno, dname, loc

from dept;

insert into emp (empno, ename, job, mgr, hiredate, sal, comm, deptno)

values (7566, 'JONES', 'MANAGER', 7839, date '1981-04-02', 2975, null, 20),

(7698, 'BLAKE', 'MANAGER', 7839, date '1981-05-01', 2850, null, 30),

(7782, 'CLARK', 'MANAGER', 7839, date '1981-06-09', 2450, null, 10),

(7788, 'SCOTT', 'ANALYST', 7566, date '1987-04-19', 3000, null, 20),

(7902, 'FORD', 'ANALYST', 7566, date '1981-12-03', 3000, null, 20),

(7499, 'ALLEN', 'SALESMAN', 7698, date '1981-02-20', 1600, 300, 30),

(7521, 'WARD', 'SALESMAN', 7698, date '1981-02-22', 1250, 500, 30),

(7654, 'MARTIN', 'SALESMAN', 7698, date '1981-09-28', 1250, 1400, 30),

(7844, 'TURNER', 'SALESMAN', 7698, date '1981-09-08', 1500, 0, 30),

(7900, 'JAMES', 'CLERK', 7698, date '1981-12-03', 950, null, 30),

(7934, 'MILLER', 'CLERK', 7782, date '1982-01-23', 1300, null, 10),

(7369, 'SMITH', 'CLERK', 7902, date '1980-12-17', 800, null, 20),

(7839, 'KING', 'PRESIDENT', null, date '1981-11-17', 5000, null, 10),

(7876, 'ADAMS', 'CLERK', 7788, date '1987-05-23', 1100, null, 20);

insert into emp_ht (empno, ename, job, mgr, hiredate, sal, comm, deptno)

select empno, ename, job, mgr, hiredate, sal, comm, deptno

from emp;

commit;Table DEPT created.

Table DEPT_HT created.

Index DEPT_HT_DEPT_FK_I created.

Table EMP created.

Index EMP_DEPT_FK_I created.

Index EMP_EMP_FK_I created.

Table EMP_HT created.

Index EMP_HT_EMP_FK created.

Index EMP_HT_DEPT_FK created.

4 rows inserted.

4 rows inserted.

14 rows inserted.

14 rows inserted.

Commit complete.Prohibit Overlapping Periods

Chris Saxon explains in this blog post how to prevent overlapping time ranges with SQL assertions. We use a similar approach here for dept_ht.

create assertion if not exists no_overlapping_dept_ht_vt_period_as

check (

not exists (

select 'a department version (d1)'

from dept_ht d1

where exists (

select 'an overlapping department version (d2)'

from dept_ht d2

where d2.deptno = d1.deptno

and d2.hist_id != d1.hist_id

and (d1.vt_start is null or d2.vt_end is null or d1.vt_start < d2.vt_end)

and (d2.vt_start is null or d1.vt_end is null or d2.vt_start < d1.vt_end)

)

)

) deferrable initially immediate;Assertion NO_OVERLAPPING_DEPT_HT_VT_PERIOD_AS created.Let’s test the assertion. We defer all constraints to make the example easier to implement and read.

set constraints all deferred;

delete from dept_ht where deptno = 10;

insert into dept_ht (deptno, dname, loc, vt_start, vt_end)

values (10, 'ACCOUNTING', 'NEW_YORK', null, date '2026-04-01'),

(10, 'ACCOUNTING', 'WINTERTHUR', date '2026-03-01', null );

commit;Constraints ALL succeeded.

1 row deleted.

2 rows inserted.

Error starting at line : 6 in command -

commit

Error report -

ORA-02091: transaction rolled back

ORA-08601: SQL assertion (DEMO.NO_OVERLAPPING_DEPT_HT_VT_PERIOD_AS) violated.

https://docs.oracle.com/error-help/db/ora-02091/

02091. 00000 - "transaction rolled back"

*Cause: Also see error 2092. If the transaction is aborted at a remote

site then you will only see 2091; if aborted at host then you will

see 2092 and 2091.

*Action: Add rollback segment and retry the transaction.The test fails at line 6, at the commit statement, exactly as expected.

Let’s insert two adjacent (gapless) periods.

set constraints all deferred;

delete from dept_ht where deptno = 10;

insert into dept_ht (deptno, dname, loc, vt_start, vt_end)

values (10, 'ACCOUNTING', 'NEW_YORK', null, date '2026-04-01'),

(10, 'ACCOUNTING', 'WINTERTHUR', date '2026-04-01', null );

commit;Constraints ALL succeeded.

1 row deleted.

2 rows inserted.

Commit complete.The test succeeds. So far so good.

However, this change leaves the redundant current-state copy in dept out of sync. After any change to dept_ht, the API should execute a statement like the following within the same transaction:

update dept d

set d.dname = ht.dname,

d.loc = ht.loc

from (

select deptno, dname, loc

from dept_ht

where deptno = 10

order by vt_start desc nulls first

fetch first 1 row only

) ht

where d.deptno = ht.deptno;1 row updated.Of course, we would need a similar SQL assertion for the period in emp_ht. However, this is not essential for this blog post. Therefore, we will skip it.

Temporal Foreign Keys

Let’s now look at another typical problem in temporal data models: Temporal foreign keys. We have two temporal foreign keys in our model:

emp_ht_emp_mgr_fkemp_ht_dept_fk

Although the foreign key technically references a non-temporal table, the business rule is temporal: the referenced entity must be valid throughout the relevant period of the referencing row.

In this example, that means a manager must exist for the entire validity period of the employee version.

Sounds complicated? Let’s look at an example based on emp_ht_emp_mgr_fk.

update emp_ht

set vt_start = hiredate;

select e.vt_start, e.vt_end, e.empno, e.ename, e.mgr, m.ename as mgr_name, m.hiredate as mgr_hiredate,

e.mgr is not null and hm.empno is null as is_inconsistent

from emp_ht e

left join emp m

on m.empno = e.mgr

left join emp_ht hm

on hm.empno = e.mgr

and (hm.vt_start is null or (e.vt_start is not null and hm.vt_start <= e.vt_start))

and (hm.vt_end is null or e.vt_start is null or hm.vt_end > e.vt_start)

order by e.empno;

rollback;14 rows updated.

VT_START VT_END EMPNO ENAME MGR MGR_NAME MGR_HIREDATE IS_INCONSISTENT

---------- ---------- ---------- ---------- ---------- ---------- ------------ ---------------

1980-12-17 7369 SMITH 7902 FORD 1981-12-03 true

1981-02-20 7499 ALLEN 7698 BLAKE 1981-05-01 true

1981-02-22 7521 WARD 7698 BLAKE 1981-05-01 true

1981-04-02 7566 JONES 7839 KING 1981-11-17 true

1981-09-28 7654 MARTIN 7698 BLAKE 1981-05-01 false

1981-05-01 7698 BLAKE 7839 KING 1981-11-17 true

1981-06-09 7782 CLARK 7839 KING 1981-11-17 true

1987-04-19 7788 SCOTT 7566 JONES 1981-04-02 false

1981-11-17 7839 KING false

1981-09-08 7844 TURNER 7698 BLAKE 1981-05-01 false

1987-05-23 7876 ADAMS 7788 SCOTT 1987-04-19 false

1981-12-03 7900 JAMES 7698 BLAKE 1981-05-01 false

1981-12-03 7902 FORD 7566 JONES 1981-04-02 false

1982-01-23 7934 MILLER 7782 CLARK 1981-06-09 false

14 rows selected.

Rollback complete.First, we set the start of the valid period for all employees according to their hire date.

Then, we queried the employees and their managers at the start of their valid time period. Employees without a manager at that time are marked with true in the result column is_inconsistent.

CLARK, for example, was hired on 9 June 1981. His manager, KING, was hired on 17 November 1981. Consequently, CLARK had no assigned manager between 9 June 1981 and 17 November 1981. This is an invalid temporal foreign key. To resolve this issue, we must either assign CLARK a manager for this period or amend KING’s valid time period, which would likely require adjusting his hire date as well.

Valid Manager At the Start of a Period

Let’s implement the check in the previous query as an SQL assertion.

create assertion if not exists cover_vt_start_in_emp_ht_emp_mgr_fk_as

check (

not exists (

select 'an employee version (e)'

from emp_ht e

where e.mgr is not null

and not exists (

select 'a manager version (m) covering e.vt_start'

from emp_ht m

where m.empno = e.mgr

and (m.vt_start is null or (e.vt_start is not null and m.vt_start <= e.vt_start))

and (m.vt_end is null or e.vt_start is null or m.vt_end > e.vt_start)

)

)

) deferrable initially immediate;Assertion COVER_VT_START_IN_EMP_HT_EMP_MGR_FK_AS created.Let’s try to create a gap in manager coverage by setting the vt_start to a non-null value for a manager.

update emp_ht

set vt_start = hiredate

where ename = 'KING';Error starting at line : 1 in command -

update emp_ht

set vt_start = hiredate

where ename = 'KING'

Error report -

ORA-08601: SQL assertion (DEMO.COVER_VT_START_IN_EMP_HT_EMP_MGR_FK_AS) violated.

https://docs.oracle.com/error-help/db/ora-08601/Good. The update fails because JONES, BLAKE and CLARK would have no manager assigned at the beginning of their valid time period.

Now let’s try to set vt_start for an employee who is not a manager.

update emp_ht

set vt_start = hiredate

where ename = 'ADAMS';

rollback;1 row updated.

Rollback complete.The update succeeded because ADAMS is not a manager and, therefore, no gap in manager coverage was created.

Valid Manager At the End of a Period

The previous SQL assertion ensures that manager coverage exists at the start of a period. However, to guarantee temporal data integrity for the entire period, we need an additional SQL assertion.

create assertion if not exists cover_vt_end_in_emp_ht_emp_mgr_fk_as

check (

not exists (

select 'an employee version (e)'

from emp_ht e

where e.mgr is not null

and exists (

select 'a manager version (m) ending before e.vt_end'

from emp_ht m

where m.empno = e.mgr

and m.vt_end is not null

and (e.vt_start is null or m.vt_end > e.vt_start)

and (e.vt_end is null or m.vt_end < e.vt_end)

and not exists (

select 'a manager version (m2) covering the m.vt_end'

from emp_ht m2

where m2.empno = e.mgr

and m2.hist_id != m.hist_id

and (m2.vt_start is null or m2.vt_start <= m.vt_end)

and (m2.vt_end is null or m2.vt_end > m.vt_end)

)

)

)

) deferrable initially immediate;Assertion COVER_VT_END_IN_EMP_HT_EMP_MGR_FK_AS created.Let’s try now to set the vt_end to a non-null value of a manager.

update emp_ht

set vt_end = date '2100-01-01'

where ename = 'KING';Error starting at line : 1 in command -

update emp_ht

set vt_end = date '2100-01-01'

where ename = 'KING'

Error at Command Line : 1 Column : 8

Error report -

SQL Error: ORA-08601: SQL assertion (DEMO.COVER_VT_END_IN_EMP_HT_EMP_MGR_FK_AS) violated.

https://docs.oracle.com/error-help/db/ora-08601/Good. The update failed because JONES, BLAKE and CLARK would have had no manager from 1 January 2100 until the end of time.

update emp_ht

set vt_end = date '2100-01-01'

where ename = 'ADAMS';

rollback;1 row updated.

Rollback complete.The update succeeded because ADAMS is not a manager and, therefore, no gap in manager coverage was created.

Well, those were just simple test cases. What would happen if we left a one-day gap between versions of the history? Would this be detected?

set constraints all deferred;

delete from emp_ht where ename = 'KING';

insert into emp_ht (vt_start, vt_end, empno, ename, job, hiredate, sal, deptno)

values (null, date '1981-11-17', 7839, 'KING', 'PRESIDENT', date '1981-11-17', 2000, 10),

(date '1981-11-17', date '2100-01-01', 7839, 'KING', 'PRESIDENT', date '1981-11-17', 4500, 10),

(date '2100-01-02', date '2200-01-01', 7839, 'KING', 'PRESIDENT', date '1981-11-17', 4600, 10),

(date '2200-01-01', null , 7839, 'KING', 'PRESIDENT', date '1981-11-17', 5000, 10);

commit;Constraints ALL succeeded.

1 row deleted.

4 rows inserted.

Error starting at line : 8 in command -

commit

Error report -

ORA-02091: transaction rolled back

ORA-08601: SQL assertion (DEMO.COVER_VT_END_IN_EMP_HT_EMP_MGR_FK_AS) violated.

https://docs.oracle.com/error-help/db/ora-02091/

02091. 00000 - "transaction rolled back"

*Cause: Also see error 2092. If the transaction is aborted at a remote

site then you will only see 2091; if aborted at host then you will

see 2092 and 2091.

*Action: Add rollback segment and retry the transaction.Yes! The commit statement failed because no version covered 1 January 2100.

When we fix the date on line 6, the transaction should succeed. Let’s try that.

set constraints all deferred;

delete from emp_ht where ename = 'KING';

insert into emp_ht (vt_start, vt_end, empno, ename, job, hiredate, sal, deptno)

values (null, date '1981-11-17', 7839, 'KING', 'PRESIDENT', date '1981-11-17', 2000, 10),

(date '1981-11-17', date '2100-01-01', 7839, 'KING', 'PRESIDENT', date '1981-11-17', 4500, 10),

(date '2100-01-01', date '2200-01-01', 7839, 'KING', 'PRESIDENT', date '1981-11-17', 4600, 10),

(date '2200-01-01', null , 7839, 'KING', 'PRESIDENT', date '1981-11-17', 5000, 10);

commit;Constraints ALL succeeded.

1 row deleted.

4 rows inserted.

Commit complete.Perfect. This works.

We have successfully enforced temporal referential integrity for the manager relationship using SQL assertions.

Summary

In this blog post, we created the SQL assertion no_overlapping_dept_ht_vt_period_as to ensure validity periods in the table dept_ht do not overlap.

We enforced temporal referential data integrity for the foreign key emp_ht_emp_mgr_fk using the SQL assertions cover_vt_start_in_emp_ht_emp_mgr_fk_as and cover_vt_end_in_emp_ht_emp_mgr_fk_as. This means that an employee always has a valid manager assigned throughout their validity period. Any gaps in manager coverage are detected correctly.

Adapting these SQL assertions for use in templates for temporal API generators should not be too difficult. All necessary information is in the Oracle data dictionary. With some additional metadata, we can generate additional SQL assertions for related, optional topics such as:

- Enforce adjacent periods (no gaps)

- Disallow adjacent periods with the same business values (enforce merge of periods)

- Disallow different business keys across periods (enforce business key updates on all versions)

- Disallow new versions for changes to non-versioned columns only (enforce update of all versions instead)

Although SQL assertions make it easier to enforce the integrity of temporal data, the use of temporal tables measurably increases complexity. Therefore, only use temporal tables if you really need to. Document which columns require temporal handling and explain why.

In any case, the integration of SQL assertions into Oracle AI Database makes the use of temporal tables much easier.

The post Using SQL Assertions to Enforce Temporal Data Integrity appeared first on Philipp Salvisberg's Blog.

]]>